From Boids to Documents – Part 2

Posted: July 20, 2012 Filed under: Human and Computer Interaction, Research | Tags: App, Boids, C++, HCI, Independent Elements, Publication, Research, Visualization, WinAPI

If you haven’t read Part 1, head there!

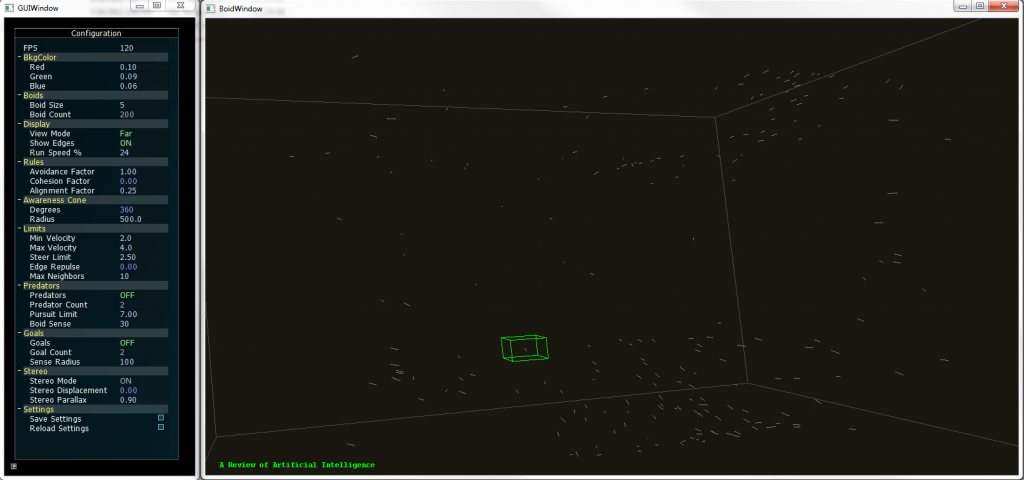

This year we started development on a new version of the boids program. The idea: each element is a document, and documents can be grouped by similarity.

To build this, we needed a parser module that reads documents such as PDF files and creates a data structure describing the similarity between each pair of them. That was done entirely by a partner while I focused on the visualization itself.

As the paper describes, the idea was to add a fourth rule to the Boids algorithm that steers each element toward other similar elements. There are several ways to do this, and they are described in the publication. We applied that idea alongside a modified algorithm I designed that folds the similarity values into a cohesion-like behavior. The results were pretty impressive after some tweaking of the other rules.
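To make the fourth rule a bit more concrete, here is a minimal sketch of one way a similarity-weighted cohesion could look. It is not the code from the application or the exact formulation from the paper: `Vec3`, `similarityCohesion`, the `strength` factor, and the assumption that similarity values lie between 0 and 1 are all made up for illustration.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical minimal 3D vector; not taken from the original program.
struct Vec3 {
    float x;
    float y;
    float z;
};

// Hypothetical fourth rule: steer element `self` toward the centroid of all
// other elements, weighting each one by its similarity to `self`.
// Assumption: similarity[i][j] lies in [0, 1] and comes from the parser module.
Vec3 similarityCohesion(std::size_t self,
                        const std::vector<Vec3>& positions,
                        const std::vector<std::vector<float>>& similarity,
                        float strength) {
    assert(self < positions.size());
    assert(similarity.size() == positions.size());
    assert(similarity[self].size() == positions.size());

    Vec3 weightedSum{0.0f, 0.0f, 0.0f};
    float totalWeight = 0.0f;

    for (std::size_t j = 0; j < positions.size(); ++j) {
        if (j == self) {
            continue;  // an element does not attract itself
        }
        const float w = similarity[self][j];
        weightedSum.x += w * positions[j].x;
        weightedSum.y += w * positions[j].y;
        weightedSum.z += w * positions[j].z;
        totalWeight += w;
    }

    // With no similar documents there is nothing to steer toward.
    if (totalWeight <= 0.0f) {
        return Vec3{0.0f, 0.0f, 0.0f};
    }

    // Steering vector from the element to the weighted centroid, scaled down.
    const Vec3& p = positions[self];
    return Vec3{strength * (weightedSum.x / totalWeight - p.x),
                strength * (weightedSum.y / totalWeight - p.y),
                strength * (weightedSum.z / totalWeight - p.z)};
}
```

In a sketch like this, the returned vector would simply be added to the element's velocity together with the output of the three classic rules, and `strength` is the knob you would tweak against them.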

The new version supports full navigation and selection of individual elements. The title of the document can be seen while a selection is made.

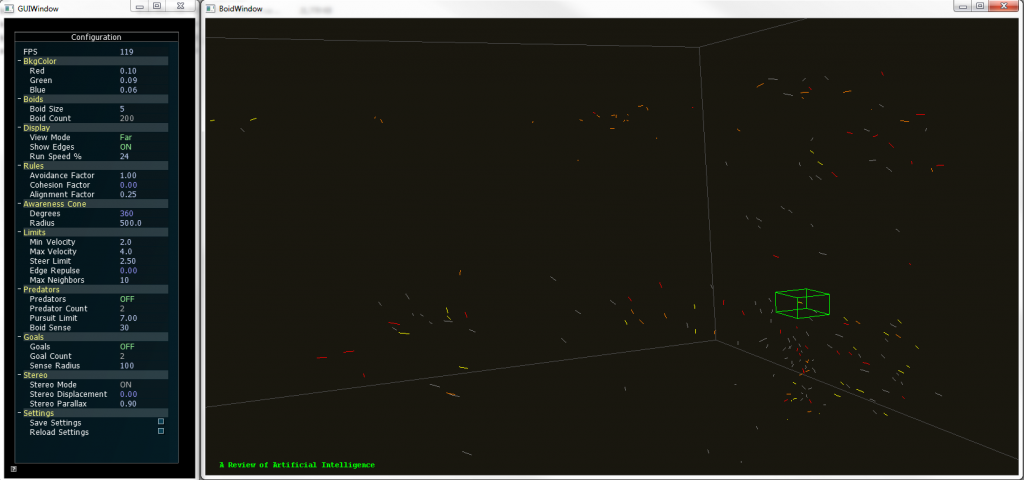

To give the user more insight into the visualization, we developed a color coding where the user simply selects a keyword, and the documents that contain that keyword are highlighted with a user-selected color. Going beyond that, the user can select an element to show the title of that particular document and even open it from that interface.

We also added freeform movement and other interactions that let the user move around the 3D space.

Boids 2.0 supports coloring of up to two keywords, so the documents that contain a particular keyword are highlighted; if they contain both keywords, they show a mixed color. All these features can be configured by the user in real time.
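As a rough illustration of the two-keyword coloring, the sketch below picks a color for one document. Everything in it is an assumption for the sake of the example: the `Color` struct, the `highlightColor` function, the plain case-sensitive substring match, and especially the mixing, which here is just a per-channel average and may not be how the application blends colors.

```cpp
#include <cstdint>
#include <string>

// Hypothetical RGB color; not taken from the original program.
struct Color {
    std::uint8_t r;
    std::uint8_t g;
    std::uint8_t b;
};

// Assumption: "mixed color" is modeled as a per-channel average.
Color mixColors(Color a, Color b) {
    return Color{static_cast<std::uint8_t>((a.r + b.r) / 2),
                 static_cast<std::uint8_t>((a.g + b.g) / 2),
                 static_cast<std::uint8_t>((a.b + b.b) / 2)};
}

// An empty keyword counts as "not set"; matching is a simple,
// case-sensitive substring search.
bool containsKeyword(const std::string& text, const std::string& keyword) {
    return !keyword.empty() && text.find(keyword) != std::string::npos;
}

// Choose the display color of a document given up to two keywords.
Color highlightColor(const std::string& documentText,
                     const std::string& firstKeyword,
                     const std::string& secondKeyword,
                     Color firstColor,
                     Color secondColor,
                     Color neutralColor) {
    const bool hasFirst = containsKeyword(documentText, firstKeyword);
    const bool hasSecond = containsKeyword(documentText, secondKeyword);

    if (hasFirst && hasSecond) {
        return mixColors(firstColor, secondColor);
    }
    if (hasFirst) {
        return firstColor;
    }
    if (hasSecond) {
        return secondColor;
    }
    return neutralColor;  // document matches neither keyword
}
```

Because the function only depends on the document text, the keywords, and the colors, it can be re-run for every element whenever the user changes a keyword or a color, which fits the real-time configuration described above.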

In other words, we developed a whole different way to browse documents and the application served as a proof of concept for a different take on visualizing documents. And we didn’t stop there.

Tune in next time to find out how we added Kinect support to the whole thing!

Not quite what you expected – Condition ONE

Posted: January 5, 2012 Filed under: Human and Computer Interaction, User Experience | Tags: App, Immersion, iPad, Not quite what you expected

It’s extremely surprising how in this industry we find innovation almost daily. Anyone who tries to stay up to date with everything that happens will tell you how hard it is. But in the end, many solutions are based on an idea whose implementation is not quite what the concept was aiming for.

Most ideas begin as something like “wouldn’t it be cool if we did this” and then derive into something more specific and feasible, something like “wouldn’t it be cool if we did this for this line of users in this age range, with this particular need, at these certain times of the day”. And yes, they seem well structured, and the technology evaluated seems to be a good fit. But in the interactive world in particular, the evaluation or development of the technology, or of the content itself, often fails, and products are rushed to market as good ideas with poor execution.

Recently I was checking out the Condition ONE app on the App Store. It’s an app that immerses you in different scenes where different things happen, mostly documentaries about certain events, reports, etc. The app plays an interactive video: you can move the device in any direction to change the perspective of the camera. This gives you the 360-degree power to look at the surroundings of the events, trying to emulate the feeling of being there.

Take a look at the video below and you will get a good idea of how the app works:

Condition ONE Demo from Danfung Dennis on Vimeo.

Awesome, right? Not quite. I was thrilled to try it, but I was already expecting some hiccups along the road. The thing is, most applications of this type rely on accelerometers or gyroscopes (or both) that constantly check the position of the device. Most portable products nowadays use them for detecting screen orientation, and that works all right. Some even use compass features, and sometimes the sensors are so reliable that you can do some pretty precise work. We have also seen them countless times in pedometer apps and games.

Now, if you want to use this for something like controlling a perspective, it might not be perfect. To begin with, you need a reference, a point to act as the horizon, and from there on you move the perspective. Some Wii games, for example, will ask you to point to the center of the screen before interacting with the camera, or simply tell you to point to the center and recalibrate the camera with a button. This app does that with a button called center. I know what it does, but a typical user of the app would not.
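For the curious, the recentering idea can be sketched in a few lines. This is not how Condition ONE implements its center button (I have no idea what their code looks like); it is just a minimal illustration, assuming a hypothetical raw yaw reading in degrees from the device sensors, with made-up names `wrapDegrees` and `ViewCalibrator`.

```cpp
#include <cmath>

// Wrap an angle in degrees into the range [-180, 180).
double wrapDegrees(double angle) {
    double wrapped = std::fmod(angle + 180.0, 360.0);
    if (wrapped < 0.0) {
        wrapped += 360.0;  // std::fmod keeps the sign of its first argument
    }
    return wrapped - 180.0;
}

// Hypothetical helper: remembers which raw sensor yaw counts as "straight
// ahead" and reports every later reading relative to that reference.
class ViewCalibrator {
public:
    // Called when the user presses a "center" button.
    void center(double rawYawDegrees) {
        referenceYaw_ = rawYawDegrees;
    }

    // Camera yaw relative to the last centered direction.
    double cameraYaw(double rawYawDegrees) const {
        return wrapDegrees(rawYawDegrees - referenceYaw_);
    }

private:
    double referenceYaw_ = 0.0;
};
```

If the user centers while the sensor reads 350 degrees and then turns until it reads 10 degrees, the camera yaw comes out as 20 degrees to one side rather than a jump of -340, which is the whole point of having a reference.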

After that it gets really annoying, because the content is not well made for the experience. You don’t have control over focus or depth, so some scenes are way too close to look properly at something interesting, and you end up missing the whole point. Also, it was filmed with shaky hands, which creates a lot of disorientation when your device is not moving at all, or makes it feel like the app is not responding properly. This is very important if the Condition ONE team is trying to create immersion, because these particular problems make users feel like they don’t have control over their actions.

But this is not entirely a bad thing, because from mistakes there’s learning and from learning there’s success. These errors can easily be fixed to fit the technology present on the iPad, with a revised design and a 1.5 release that could be usable and not just something to toy with for a couple of minutes and then never launch again. The calibration issue is workable by teaching the user how to use the app, and the video could be made more stable and better thought out. Some more controlled documentaries could help to experiment a little better with this before going to the field. Also, make it really worthwhile to see the whole spectrum of the scene, and when you focus on something, give cues to the user to redirect their attention there.

Condition ONE team, you’ve got something pretty cool here. Don’t drop it; polish it and make it shine! Also, this would be so cool in a CAVE-like environment!