iTunes needs better previews

Posted: July 30, 2012 Filed under: User Experience | Tags: Apple, Fix, iTunes, Music

A person sits at her computer browsing for new songs to listen to. She opens iTunes and checks her favorite artist. Their last album had one of the best singles ever, and she’s curious about what’s in store on the rest of the album. It’s good to know that she can use the 30-second previews of the songs to make her next purchase decisions. The problem is that none of the songs feel right; they’re not “that good”. What about the song that she heard on the radio or at a friend’s house? It’s supposed to be on this, their newest album, yet she can’t find it anywhere.

This might have occurred to you: although previews are an awesome feature, they need to be under the artist’s control. As with promotional videos, the album cover or even live shows, the artist exercises a certain control over the whole process to achieve what is desired in terms of entertainment or even art. Nonetheless, previews on iTunes are created either automatically or by some people behind the scenes, not by the actual artist. So who decides what’s there? Who decides what the most important or identifiable part of a song is?

Just as movie trailers can make you decide whether you are going to watch a movie, these previews do the same for music. It’s extremely important that they keep evolving, favoring the artist and, indirectly, the consumer.

A simple solution for this, Apple: let artists choose whether they want iTunes to generate the preview for them or customize it to their liking. How? Let them input the portions of the song they want to use as the preview. Of course there are many ways to do this: by manually entering times, by providing a simple editor that lets the artist select the portion interactively and preview it before submitting it, or even by letting the artist upload pre-authored previews. Not only does this solve the problem, but artists can also plan ahead for these previews while they are in the studio and record them properly. For example, the artist could create a very simplified version of the song, or an edited portion that actually highlights its signature moments.

This is one minor complaint about the awesome experience that digital distribution provides us. Keep the artists happy and they will provide better content; the end users will benefit from it and so, of course, will your company. We need to keep evolving!

From Boids to Documents – Part 3

Posted: July 21, 2012 Filed under: Random Talk

If you haven’t read the previous parts, check them out: Part 1, Part 2.

We went a step further and incorporated Kinect support. Why? Because it can augment the perception and overall experience of the visualization, and because it’s fun.

So we proceeded to add head tracking coupled with 3D stereo and projected it using two high-definition stereoscopic projectors. The results were amazing. Here’s a quick video that I made on my tablet; it shows the use of both the regular desktop interface and the Kinect one:

Imagine: head tracking changes the perspective according to your body movements, and the 3D glasses produce a good depth effect. It is exactly as if you were watching all these elements interacting through a real window.
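For the curious, the "real window" effect is usually obtained with an off-axis (asymmetric) projection that is recomputed from the tracked head position every frame. The following is only a minimal sketch of that idea, not the project's code. It assumes the screen is a rectangle centered at the origin in the z = 0 plane and that the head position is given in the same units; `Frustum` and `offAxisFrustum` are hypothetical names.

```cpp
#include <cassert>

// Frustum bounds measured on the near plane, in the order glFrustum expects.
struct Frustum {
    float left, right, bottom, top;
};

// Assumed setup: the screen is centered at the origin in the z = 0 plane and
// the tracked head is at (headX, headY, headZ) with headZ > 0, all in the
// same units (for example meters).
Frustum offAxisFrustum(float headX, float headY, float headZ,
                       float screenWidth, float screenHeight,
                       float nearPlane) {
    assert(headZ > 0.0f && nearPlane > 0.0f);
    // Similar triangles: offsets from the eye to the screen edges, measured at
    // distance headZ, are scaled down to the near plane distance.
    const float scale = nearPlane / headZ;
    Frustum f;
    f.left   = (-0.5f * screenWidth  - headX) * scale;
    f.right  = ( 0.5f * screenWidth  - headX) * scale;
    f.bottom = (-0.5f * screenHeight - headY) * scale;
    f.top    = ( 0.5f * screenHeight - headY) * scale;
    return f;
}
```

In a fixed-function OpenGL program these bounds would typically be passed to `glFrustum` together with the near and far distances, and the scene would then be translated by the negated head position. For stereo, one plausible approach is to call the function once per eye with `headX` shifted by half the distance between the eyes.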

The visualization team here at UTPA is really proud of what has been accomplished this summer, and we still have some other stuff coming. It’s not over yet!

Next post: more about my nearly finished thesis and some personal Software Design (UX) projects that are in the oven.

From Boids to Documents – Part 2

Posted: July 20, 2012 Filed under: Human and Computer Interaction, Research | Tags: App, Boids, C++, HCI, Independent Elements, Publication, Research, Visualization, WinAPI

If you haven’t read Part 1, head there!

This year we started development of a new version of the boids program. The idea: each element is a document, and documents can be grouped by similarity.

To create such a thing we needed a parser module that reads documents such as PDF files and creates a data structure providing the similarity between each pair of them. That was done entirely by a partner while I focused on the visualization itself.

As the paper describes, the idea was to add a fourth rule to the Boids algorithm that basically directs each element to move closer to other similar elements. There are several ways to do this, and they are described in the publication. We applied that idea along with a modified algorithm I designed that easily folds the similarity values into a cohesion-like behavior. The results were pretty impressive after some tweaking of the other rules.

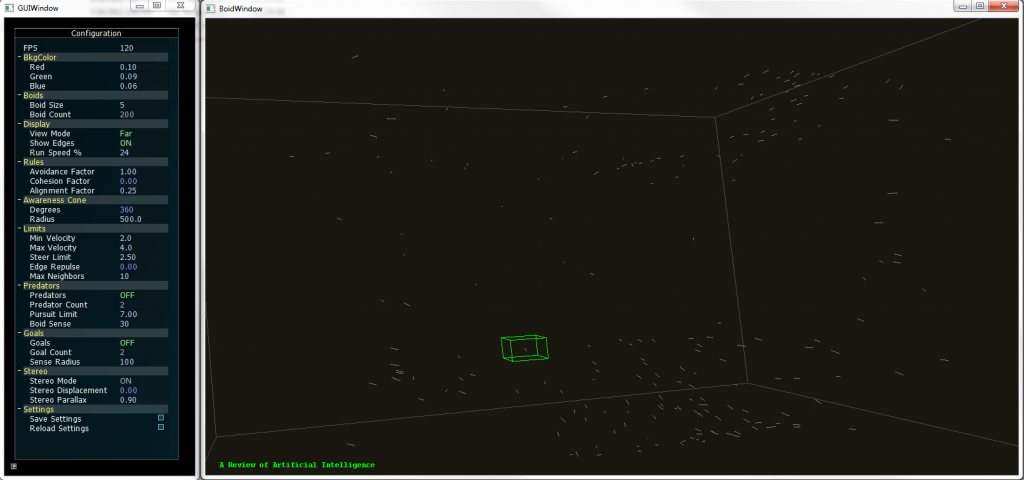
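To give a rough idea of what a similarity-driven fourth rule can look like, here is a minimal sketch. It is not the algorithm from the publication: it simply assumes the parser produces a square matrix of pairwise similarities in the range [0, 1] and steers each boid toward the similarity-weighted centroid of the others. `Vec3`, `SimilarityMatrix` and `similarityCohesion` are hypothetical names.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical minimal 3D vector; the original program's types are not shown.
struct Vec3 { float x, y, z; };

inline Vec3 operator+(Vec3 a, Vec3 b) { return {a.x + b.x, a.y + b.y, a.z + b.z}; }
inline Vec3 operator-(Vec3 a, Vec3 b) { return {a.x - b.x, a.y - b.y, a.z - b.z}; }
inline Vec3 operator*(Vec3 a, float s) { return {a.x * s, a.y * s, a.z * s}; }

// Assumed layout: similarity[i][j] in [0, 1], higher means more alike.
using SimilarityMatrix = std::vector<std::vector<float>>;

// Steering vector that pulls boid `self` toward the similarity-weighted
// centroid of all other boids. Returns zero if nothing is similar to it.
Vec3 similarityCohesion(std::size_t self,
                        const std::vector<Vec3>& positions,
                        const SimilarityMatrix& similarity,
                        float strength) {
    assert(self < positions.size() && similarity.size() == positions.size());
    Vec3 weightedSum{0.0f, 0.0f, 0.0f};
    float totalWeight = 0.0f;
    for (std::size_t j = 0; j < positions.size(); ++j) {
        if (j == self) continue;
        const float w = similarity[self][j];
        weightedSum = weightedSum + positions[j] * w;
        totalWeight += w;
    }
    if (totalWeight <= 0.0f) return Vec3{0.0f, 0.0f, 0.0f};
    const Vec3 centroid = weightedSum * (1.0f / totalWeight);
    return (centroid - positions[self]) * strength;
}
```

The returned vector would be added to the contributions of the other rules before the velocity is updated. This version looks at every other boid, so the cost per frame grows quadratically with the number of documents; restricting it to nearby neighbors is one possible trade-off.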

The new version supports full navigation and selection of individual elements. The title of a document is shown while it is selected.

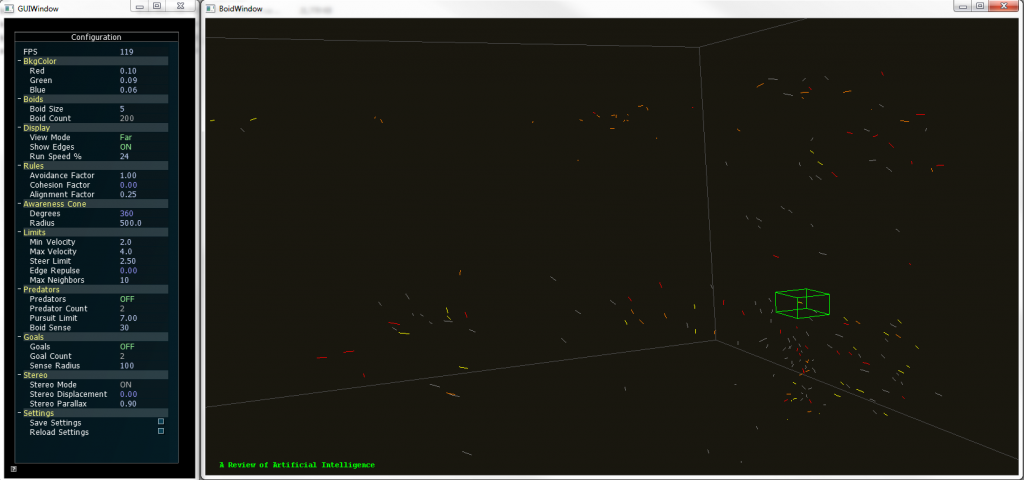

To give the user more insight into the visualization, we developed a color code where the user simply selects a keyword and the documents that contain that keyword are highlighted with a user-selected color. Going beyond that, the user can select an element to show the title of that particular document and even open it from that interface.

We also added freeform movement and other interactions that let the user move around the 3D space.

Boids 2.0 supports coloring of up to two keywords: documents that contain one of them are highlighted in its color, and documents that contain both show a mixed color. All these features can be configured by the user in real time.
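The two-keyword coloring can be sketched in a few lines. This is an illustration under assumptions, not the application's code: it treats each document as a plain list of keywords and assumes the "mixed color" is an even blend of the two user-selected colors. `Color`, `Document`, `mix` and `highlightColor` are hypothetical names.

```cpp
#include <algorithm>
#include <cassert>
#include <string>
#include <vector>

struct Color { float r, g, b; };

// Hypothetical document record; only the keyword list matters here.
struct Document {
    std::string title;
    std::vector<std::string> keywords;
};

bool containsKeyword(const Document& doc, const std::string& keyword) {
    return std::find(doc.keywords.begin(), doc.keywords.end(), keyword)
           != doc.keywords.end();
}

// Linear blend between two colors; t = 0 gives a, t = 1 gives b.
Color mix(Color a, Color b, float t) {
    assert(t >= 0.0f && t <= 1.0f);
    return {a.r + (b.r - a.r) * t,
            a.g + (b.g - a.g) * t,
            a.b + (b.b - a.b) * t};
}

// Picks the display color of one document for up to two selected keywords.
Color highlightColor(const Document& doc,
                     const std::string& keywordA, Color colorA,
                     const std::string& keywordB, Color colorB,
                     Color neutral) {
    const bool hasA = containsKeyword(doc, keywordA);
    const bool hasB = containsKeyword(doc, keywordB);
    if (hasA && hasB) return mix(colorA, colorB, 0.5f);  // assumed even blend
    if (hasA) return colorA;
    if (hasB) return colorB;
    return neutral;
}
```

With red for the first keyword and blue for the second, a document containing both would come out purple, while documents with neither keep the neutral color.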

In other words, we developed a whole different way to browse documents and the application served as a proof of concept for a different take on visualizing documents. And we didn’t stop there.

Tune in next time to find out how we added Kinect support to the whole thing!

From Boids to Documents – Part 1

Posted: July 19, 2012 Filed under: Human and Computer Interaction, Research | Tags: Boids, C++, HCI, Independent Elements, OpenGL, Stereoscopy, Visualization, WinAPI

Here’s some information on the projects, as promised.

In the summer of 2010 I was approached by my HCI instructor. I had already told him about my interest in the area and that I felt ready to start working on some projects. My instructor’s expertise lies in Data and Scientific Visualization, and he had something cooking at the moment. A fellow student was working on an independent agents program that visualizes several objects in a 3D space with a very dynamic behavior inspired by nature: they tend to group and navigate in this space just like flocks of birds, swarms of insects and schools of fish do, and they can react to their environment in different ways.

This “Boids” algorithm is very popular and is used in several graphical applications such as video games and movies, because it approximates the real behavior of these animals without necessarily simulating the way nature works, all with a very simple algorithm that is not computationally heavy. Whether for real-time or pre-rendered presentations, the technique is really outstanding.

Sort of like that, get the idea?

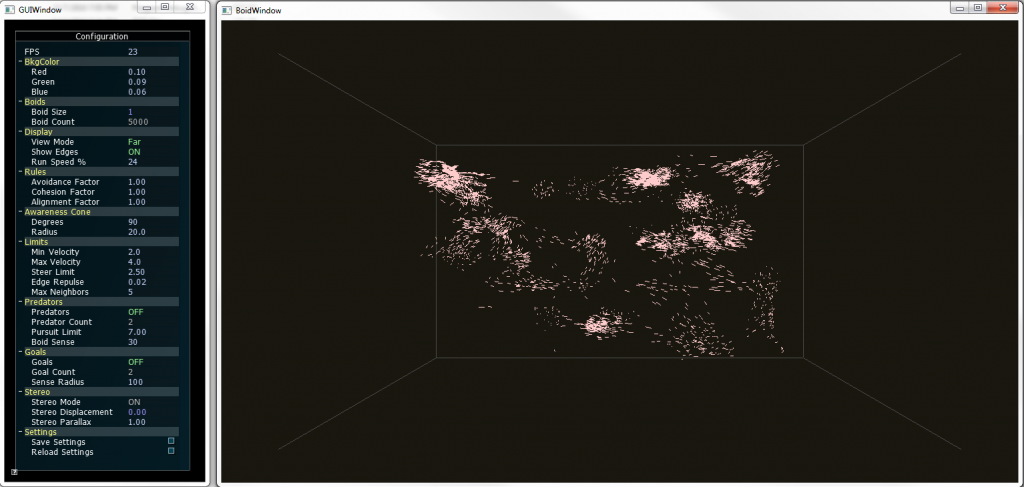
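For readers who have not seen the algorithm before, the classic formulation combines three local rules for each boid: separation, alignment and cohesion. The following is a minimal sketch of one steering step under simple assumptions (brute-force neighbor search, hypothetical names such as `Boid`, `RuleWeights` and `flockingSteer`); it is not the code of the program described here.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

struct Vec3 { float x, y, z; };

inline Vec3 operator+(Vec3 a, Vec3 b) { return {a.x + b.x, a.y + b.y, a.z + b.z}; }
inline Vec3 operator-(Vec3 a, Vec3 b) { return {a.x - b.x, a.y - b.y, a.z - b.z}; }
inline Vec3 operator*(Vec3 a, float s) { return {a.x * s, a.y * s, a.z * s}; }
inline float length(Vec3 v) { return std::sqrt(v.x * v.x + v.y * v.y + v.z * v.z); }

struct Boid { Vec3 position; Vec3 velocity; };
struct RuleWeights { float separation, alignment, cohesion; };

// Steering vector for boid i from the neighbors inside neighborRadius.
Vec3 flockingSteer(std::size_t i, const std::vector<Boid>& flock,
                   float neighborRadius, float separationRadius,
                   RuleWeights weights) {
    assert(i < flock.size());
    Vec3 sumPos{0.0f, 0.0f, 0.0f};
    Vec3 sumVel{0.0f, 0.0f, 0.0f};
    Vec3 push{0.0f, 0.0f, 0.0f};
    int count = 0;
    for (std::size_t j = 0; j < flock.size(); ++j) {
        if (j == i) continue;
        const Vec3 offset = flock[j].position - flock[i].position;
        const float dist = length(offset);
        if (dist > neighborRadius) continue;
        sumPos = sumPos + flock[j].position;
        sumVel = sumVel + flock[j].velocity;
        ++count;
        // Separation: move directly away from neighbors that are too close.
        if (dist > 0.0f && dist < separationRadius) {
            push = push - offset * (1.0f / dist);
        }
    }
    if (count == 0) return Vec3{0.0f, 0.0f, 0.0f};
    const float inv = 1.0f / static_cast<float>(count);
    const Vec3 cohesion = sumPos * inv - flock[i].position;   // toward the local center
    const Vec3 alignment = sumVel * inv - flock[i].velocity;  // match the local heading
    return push * weights.separation + alignment * weights.alignment
         + cohesion * weights.cohesion;
}
```

Each frame, the result would be added to the boid's velocity (usually with a speed limit) before moving it. With thousands of elements, a spatial grid or similar structure is a common way to avoid comparing every pair.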

Well, I jumped into the project, did some optimization of the code and developed some interactions that were needed to use the application further in experimental setups. Later we added 3D stereo to it using active shutter glasses, and let me tell you, watching thousands of elements floating out of the screen can be pretty amazing.

The previous picture shows about five thousand elements forming groups in 3D space. You can add other objects that either attract (food) or repel (predators) the boids, and you will see them react exactly as animals with similar behavior would. Everything was done using C++, WinAPI and OpenGL. The project was finished and ready to go.
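Attractors and repellers like these can be modeled as one more steering term. Here is a minimal sketch, assuming each object has a radius of influence and a linear falloff; both the falloff and the names (`Influence`, `InfluenceKind`, `influenceSteering`) are assumptions made for illustration, not details taken from the original program.

```cpp
#include <cassert>
#include <cmath>
#include <vector>

struct Vec3 { float x, y, z; };

inline Vec3 operator+(Vec3 a, Vec3 b) { return {a.x + b.x, a.y + b.y, a.z + b.z}; }
inline Vec3 operator-(Vec3 a, Vec3 b) { return {a.x - b.x, a.y - b.y, a.z - b.z}; }
inline Vec3 operator*(Vec3 a, float s) { return {a.x * s, a.y * s, a.z * s}; }
inline float length(Vec3 v) { return std::sqrt(v.x * v.x + v.y * v.y + v.z * v.z); }

enum class InfluenceKind { Food, Predator };

struct Influence {
    Vec3 position;
    InfluenceKind kind;
    float radius;  // boids farther away than this ignore the object
};

// Sums the pull of food and the push of predators acting on one boid.
Vec3 influenceSteering(Vec3 boid, const std::vector<Influence>& objects,
                       float strength) {
    Vec3 steer{0.0f, 0.0f, 0.0f};
    for (const Influence& obj : objects) {
        assert(obj.radius > 0.0f);
        const Vec3 toObject = obj.position - boid;
        const float dist = length(toObject);
        if (dist <= 0.0f || dist > obj.radius) continue;
        // Assumed linear falloff: 1 at the object, 0 at the edge of its radius.
        const float falloff = 1.0f - dist / obj.radius;
        const float sign = (obj.kind == InfluenceKind::Food) ? 1.0f : -1.0f;
        // toObject / dist is the unit direction toward the object.
        steer = steer + toObject * (sign * strength * falloff / dist);
    }
    return steer;
}
```

The result would be added to the flocking rules' output each frame, so a predator placed near a group makes it scatter while food draws it in.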

Fast-forward to 2012: theoretical research was going on based on this technique. What if each element metaphorically represented a document? Well, yes, it would be a mess, but what if we could actually sort them out by some measure of similarity? Now we are talking; we could visualize big structures of documents grouped together in clusters according to their similarity.

That’s what the newest paper is all about, and I’ll tell you how we developed a prototype in the next post.

New publications

Posted: July 9, 2012 Filed under: Human and Computer Interaction | Tags: HCI, Publication, Research, Visualization, VR

Next week some of the work done during my graduate research will be published at the 9th International Conference on Modeling, Simulation and Visualization Methods (MSV’12) and the 16th International Conference on Computer Graphics and Virtual Reality (CGVR’12). We have been working hard on some visualization techniques and some VR practices that are pretty exciting:

- Visualization and Clustering of Document Collections Using a Flock-based Swarm Intelligence Technique.

- Designing a Low Cost Immersive Environment System Twenty Years After the First CAVE.

My plan is that after the conference I’ll publish some related material on the site and the blog about the implementation of those ideas, so stay tuned for that.

Also, if you haven’t noticed, my web page is finally up. I still have to upload more portfolio material; that will be fixed in the coming weeks.