Cell phones + Kinect = Good idea?

Posted: November 1, 2011 | Filed under: Human and Computer Interaction, User Experience, User Interface | Tags: Concept, iPhone, Kinect, Motion Sensing, Natural User Interface, Smartphone, User Interface

Yesterday I found a video showing how technology like “Kinect” is reaching the cell phone market. Pantech is releasing a phone with this technology in Korea around November. Video of the commercial below:

First impression: pretty neat. Now let’s take this idea apart.

In practice, there are some things that are not apparent here. When someone is calling you, you could easily pick up with a gesture, as the ad shows, but when you are the one who wants to initiate a call, it won’t work. Cell phones usually turn off their sensors when they are locked to save battery; there’s simply no way to keep the camera on for every moment we might want to perform a task with our phones. As I said, this won’t be a problem for picking up a call, since the phone is already awake when it rings, but when it’s locked or sleeping the phone is not listening to you all the time. It would be like expecting Siri on the iPhone 4S to work without touching the button.

But there is a definite practical use for this, and I might add that it’s a really cool idea to add this functionality. Although having a camera that actively checks for hand gestures might be a real battery drain, there might be a way to build a decent camera that performs the task well without killing your battery every time you use your phone.

My main problem with the video is the lack of vision and innovation. By reproducing gestures that already work well with touch you are just creating a gimmick, a device that is just for showing off and adds little to nothing to its purpose. Swiping through photos by hovering your hand across the device doesn’t make it better, just cooler, a factor that wears off in a week or less and can actually make the user angry when the battery life inevitably gets shorter. Either they introduce a great advancement in energy management or the phone will be pretty bulky.

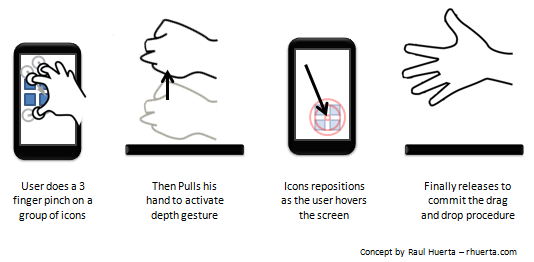

Still, the idea is awesome, but it must be justified with great implementations, like upgrading current touch gestures or creating useful gestures that can’t be done with current touch devices. A nice example would be to use a depth sensor to check how close or far the hand is from the device and make the GUI react as a function of those changes. For drag and drop this could be perfect: imagine you could pinch several objects to group them, then raise your hand, scroll around the GUI and then drop them whenever you like. This kind of interaction could be really uncomfortable on a touch-only device, and a visual recognition approach could be useful.
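To make the pinch, lift and drop idea a bit more concrete, here is a rough sketch of how it could look in code. Everything in it is an assumption for illustration: `HandSample` stands in for whatever a depth sensor would report, and `DragController`, `hover_scale` and the 3 cm lift threshold are made-up names and values, not part of any real phone or Kinect API.

```python
from dataclasses import dataclass, field
from enum import Enum, auto
from typing import List, Optional, Tuple


class State(Enum):
    IDLE = auto()      # nothing has been lifted off the screen
    CARRYING = auto()  # a group of objects is being carried in the air


@dataclass
class HandSample:
    """One hypothetical reading from a depth sensor."""
    x: float          # hand position over the screen
    y: float
    depth_cm: float   # distance between the hand and the screen
    pinching: bool    # True while thumb and index finger are closed


def hover_scale(depth_cm: float, max_depth_cm: float = 10.0) -> float:
    """Make the GUI react to depth: items grow as the hand gets closer."""
    closeness = 1.0 - min(max(depth_cm / max_depth_cm, 0.0), 1.0)
    return 1.0 + 0.5 * closeness


@dataclass
class DragController:
    lift_threshold_cm: float = 3.0  # assumed: above this the hand is "raised"
    state: State = State.IDLE
    carried: List[str] = field(default_factory=list)
    last_drop: Optional[Tuple[List[str], Tuple[float, float]]] = None

    def update(self, s: HandSample, under_hand: Optional[str] = None) -> Optional[str]:
        if not s.pinching:
            if self.state is State.CARRYING:
                # Opening the hand drops the whole group at the hand position.
                self.last_drop = (list(self.carried), (s.x, s.y))
                self.carried.clear()
                self.state = State.IDLE
                return "drop"
            self.carried.clear()  # pinch released before lifting: cancel
            return None
        if s.depth_cm <= self.lift_threshold_cm:
            # Pinching close to the screen adds the object under the hand.
            if self.state is State.IDLE and under_hand and under_hand not in self.carried:
                self.carried.append(under_hand)
                return "group"
            return None
        if self.state is State.IDLE and self.carried:
            self.state = State.CARRYING  # hand raised while still pinching
            return "lift"
        return "carry" if self.state is State.CARRYING else None
```

While the controller reports `"carry"`, the GUI would be free to scroll with the hand position, and `hover_scale` could be used to grow or shrink items as the hand approaches.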

That was just one example of what you could do with this kind of device. Another would be to replace many of the 3D gestures that usually need an anchor, in other words, the use of two hands.

Of course, such a device would have to be planned well at all levels to achieve an integral solution, but I think it is feasible to create as a mass-market product in the near future. It’s a good idea, but I don’t think the product shown in the ad will popularize it.

Have a setting or app where you could tell the console to keep Kinect on, with the light off, and take photo snaps if any movement is detected in the room during a designated time frame.

No, sorry, my limited work with Kinect was mostly about detecting the user’s movement. I guess Kinect could detect a human figure and you could react to it in code, but I’m not sure how you could turn the light off.